Building a Voice Recognition Software App

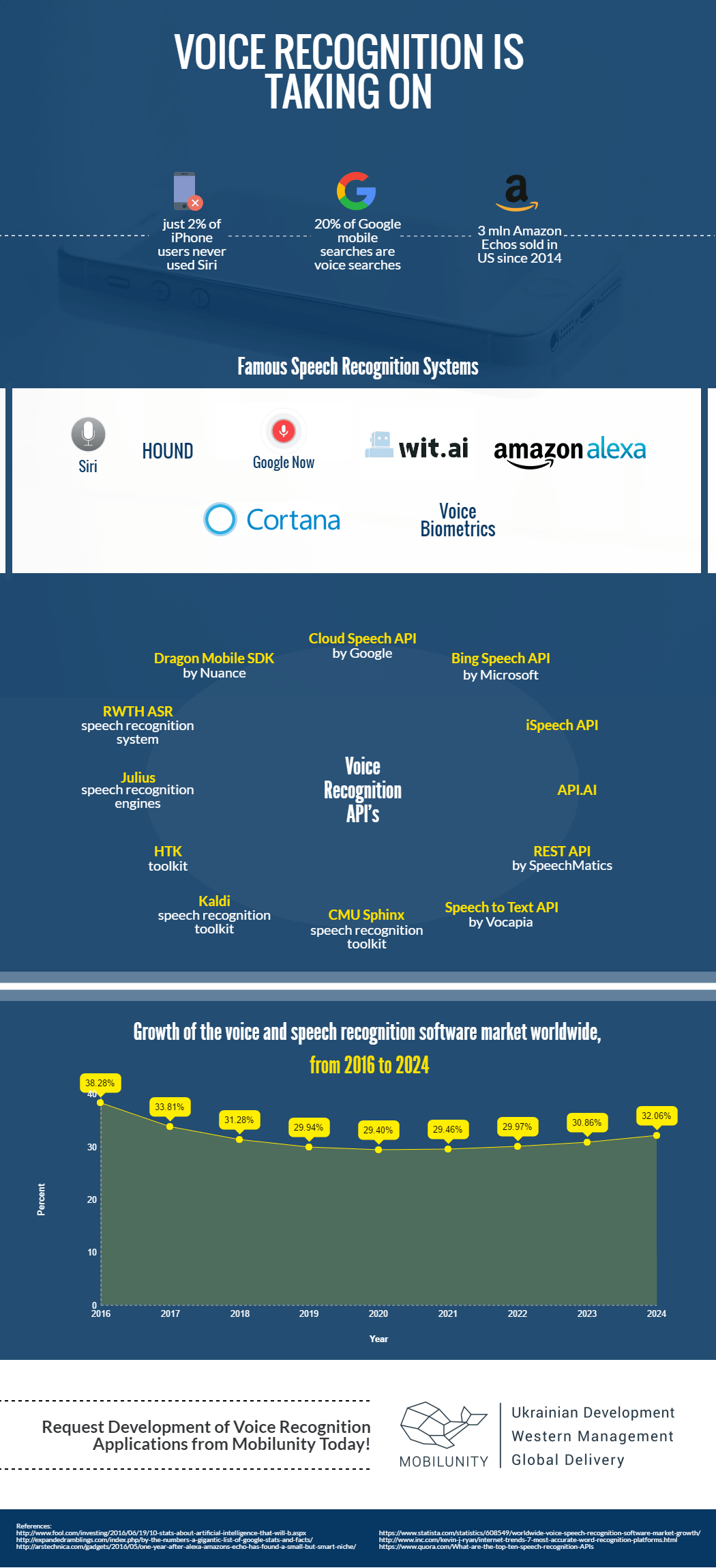

Research on human speech recognition has evolved since the mid-20th century. The 1990s saw the advent of major speech recognition players and projects such as the Sphinx-II system, Dragon Dictate, and VAL. Today, anyone can try out voice recognition technologies, as they are used in almost every sphere of life, from common digital assistants to biometric authentication in banking and speech recognizers built into military equipment. Among the best-known voice recognition products in everyday use are Siri for iPhone owners, Amazon's Alexa assistant and Echo smart speakers, voice biometrics by Nuance, and Google Voice Search.

Development of Voice and Speech Recognition Technologies

Speaker-Dependent Voice Recognition Applications

There are currently two main approaches to building speech or voice recognition applications and systems. The first, the so-called template matching approach, produces speaker-dependent systems. Such systems can recognize the voice of only one person and require prior training. The system identifies only certain sound patterns, which the user teaches the program by repeating phrases or commands a few times. The system then computes the average of the repeated samples of each phrase, and this average becomes a template. After the “training” phase, the system can recognize only those phrases that match the predefined templates.
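To make the averaging-and-matching idea concrete, here is a minimal Python sketch. It assumes each utterance has already been turned into a fixed-length feature vector; real template-matching recognizers usually compare variable-length sequences of frames, typically aligned with dynamic time warping. The `TemplateRecognizer` class, its `threshold` parameter, and the example phrases are hypothetical names chosen for illustration.

```python
import math


def average_template(samples):
    """Element-wise mean of equal-length feature vectors (one per repetition)."""
    n = len(samples)
    return [sum(values) / n for values in zip(*samples)]


def euclidean(a, b):
    """Straight-line distance between two feature vectors of the same length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


class TemplateRecognizer:
    """Speaker-dependent recognizer: one averaged template per trained phrase."""

    def __init__(self, threshold):
        self.threshold = threshold  # maximum distance accepted as a match
        self.templates = {}

    def train(self, phrase, samples):
        # The user repeats the phrase a few times; the average becomes its template.
        self.templates[phrase] = average_template(samples)

    def recognize(self, features):
        # Pick the closest template, but reject input that fits none of them.
        best_phrase, best_dist = None, float("inf")
        for phrase, template in self.templates.items():
            dist = euclidean(features, template)
            if dist < best_dist:
                best_phrase, best_dist = phrase, dist
        return best_phrase if best_dist <= self.threshold else None


if __name__ == "__main__":
    recognizer = TemplateRecognizer(threshold=1.0)
    recognizer.train("lights on", [[1.0, 2.0, 3.0], [1.2, 2.2, 2.8]])
    recognizer.train("lights off", [[5.0, 1.0, 0.0], [5.2, 0.8, 0.2]])
    print(recognizer.recognize([1.1, 2.0, 2.9]))  # → lights on
    print(recognizer.recognize([9.0, 9.0, 9.0]))  # → None
```

The rejection threshold reflects the limitation described above: input that is not close to any trained template, for example a command the user never trained, is rejected instead of being forced onto the nearest phrase.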

Speaker-Independent Voice Recognition Applications

The second technique for creating speech recognizers is the feature analysis approach, which produces speaker-independent applications. The advantage of such systems is that they can recognize the voices of multiple speakers and need no preliminary training. The voice input is processed with techniques such as linear predictive coding (LPC) or Fourier transforms, and the system then looks for characteristic similarities between the expected voice input and the phrase the user actually pronounced. This approach has one more benefit: unlike speaker-dependent systems, speaker-independent ones can cope with differences in accent, pitch, volume, speed, and so on, which vary from person to person.
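As an illustration of the LPC step, the sketch below splits a sampled signal into overlapping frames and estimates linear prediction coefficients for a frame using the autocorrelation method and the Levinson-Durbin recursion. It is a teaching sketch in plain Python, not the processing chain of any particular product; the function names `frames`, `autocorrelation`, and `lpc` are hypothetical, and a production front end would typically also apply pre-emphasis and a window (for example, Hamming) to each frame first.

```python
def frames(signal, size, hop):
    """Split a sampled signal into overlapping frames of `size` samples, `hop` apart."""
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, hop)]


def autocorrelation(x, max_lag):
    """r[k] = sum over n of x[n] * x[n - k], for k = 0..max_lag."""
    return [sum(x[n] * x[n - k] for n in range(k, len(x))) for k in range(max_lag + 1)]


def lpc(frame, order):
    """Return (a, err): a = [1, a1, ..., a_order] defines the prediction-error
    filter A(z) = 1 + a1 z^-1 + ..., and err is the remaining error energy."""
    r = autocorrelation(frame, order)
    a = [1.0] + [0.0] * order
    err = r[0]
    if err == 0.0:
        # Silent frame: there is nothing to predict.
        return a, 0.0
    for i in range(1, order + 1):
        if err <= 0.0:
            break  # perfectly predicted already; avoid dividing by zero
        # Reflection coefficient for stage i of the Levinson-Durbin recursion.
        acc = r[i] + sum(a[j] * r[i - j] for j in range(1, i))
        k = -acc / err
        prev = a[:]
        for j in range(1, i):
            a[j] = prev[j] + k * prev[i - j]
        a[i] = k
        err *= 1.0 - k * k
    return a, err
```

At a 16 kHz sampling rate, for instance, `frames(samples, 400, 160)` yields 25 ms frames with a 10 ms hop, and `lpc(frame, 12)[0][1:]` gives a 12-coefficient feature vector per frame; these values are illustrative choices, not requirements.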

How We Worked on the App with Voice Recognition Technology

Mobilunity always tries to keep up with the latest trends in high technology and IT. From video recognition to Android NFC application development and video overlay app development, we take a keen interest in voice recognition system development and other application development technologies. When we were asked to complete a complex project, a voice recognition software app, our team did in-depth research on ways to implement the speech recognition features. Our cooperation with the customer is currently at the discovery stage. We are evaluating existing voice recognition technologies and solutions and studying the experience of well-known systems whose key feature is voice recognition.

When building a voice recognition software app, the expertise of telemedicine app developers and configuration management DevOps practices can help deliver a robust, secure application that meets the demanding requirements of modern voice-based technologies. For now, we've settled on the feature analysis approach. We believe the best solution for this type of voice recognition application is to create a custom artificial neural network (ANN) and perform voice recognition from this network, building on existing APIs. Processing of the digitized voice input is going to be done with the openSMILE(ANN) library. Devices such as a cell phone and a smartwatch will be synchronized through a distributed function system. Although development itself hasn't started yet, the customer has already approved the speech recognition interface design our team created. We hope this project takes us a few steps closer to perfectly working speech recognition technology!
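The article does not describe the network architecture, so the following is only a minimal stand-in: a single-layer neural network (softmax regression) trained with stochastic gradient descent to map utterance-level feature vectors to command labels. In a real pipeline such vectors might come from a feature extractor like openSMILE, but here they are tiny hand-made examples; the class name, learning rate, epoch count, and labels are all hypothetical.

```python
import math


def softmax(z):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


class SoftmaxClassifier:
    """Single-layer neural network: a linear layer followed by softmax."""

    def __init__(self, n_features, labels):
        self.labels = list(labels)
        self.weights = [[0.0] * n_features for _ in self.labels]
        self.biases = [0.0] * len(self.labels)

    def predict_proba(self, x):
        # Forward pass: one logit per label, then softmax into probabilities.
        logits = [sum(w * xi for w, xi in zip(row, x)) + b
                  for row, b in zip(self.weights, self.biases)]
        return softmax(logits)

    def fit(self, X, y, lr=0.5, epochs=200):
        # Stochastic gradient descent on cross-entropy loss; the gradient with
        # respect to each logit is (predicted probability - one-hot target).
        for _ in range(epochs):
            for x, label in zip(X, y):
                probs = self.predict_proba(x)
                target = self.labels.index(label)
                for k, p in enumerate(probs):
                    grad = p - (1.0 if k == target else 0.0)
                    for j, xj in enumerate(x):
                        self.weights[k][j] -= lr * grad * xj
                    self.biases[k] -= lr * grad
        return self

    def predict(self, x):
        probs = self.predict_proba(x)
        return self.labels[probs.index(max(probs))]


if __name__ == "__main__":
    # Toy utterance-level feature vectors for two hypothetical commands.
    X = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
    y = ["yes", "yes", "no", "no"]
    clf = SoftmaxClassifier(n_features=2, labels=["yes", "no"]).fit(X, y)
    print(clf.predict([0.95, 0.05]))  # → yes
```

A production system would use a deeper network, far more training utterances, and held-out data for evaluation; the single layer keeps the example short while still showing the forward pass, the softmax output, and the cross-entropy gradient update.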

Create innovative web and mobile apps using human speech recognition technology with Mobilunity!
